Writing Unit Tests for a Node.js Application

Given the widespread adoption of Node.js, it’s surprising that there is not much synthesized information about the specifics of writing unit tests on this platform. I recently open-sourced Nagual, an HTTP simulator for faster and more reliable automation tests. These are the challenges I faced writing unit tests for a Node.js application.

Linter

Linters are one of the three pillars of automated testing. Given how easy they are to set up, it makes no sense not to use one. They are fast and incredibly useful when working with interpreted languages, and they catch some corner cases and bugs that unit tests miss. For JavaScript you have two main options, JSHint and ESLint (excluding JSLint, the father of JS linters, which many find too opinionated). ESLint is newer and is what the cool kids are using these days.

Test Framework

There are a couple of actively supported unit test frameworks for Node.js. I chose Jasmine, mainly because it has all the basic functionality built in. For example, it has its own spy functionality:

spyOn(foo, 'setBar');

If you choose another unit test framework, say Mocha, you need an external spy library such as Sinon.JS:

var sinon = require('sinon');

sinon.spy(foo, "setBar");

It’s not a big deal, but I’m more productive when all the parts I need are in the same package. There is no need to research, pick and choose, so the paradox of choice does not kick in.

Directory Structure

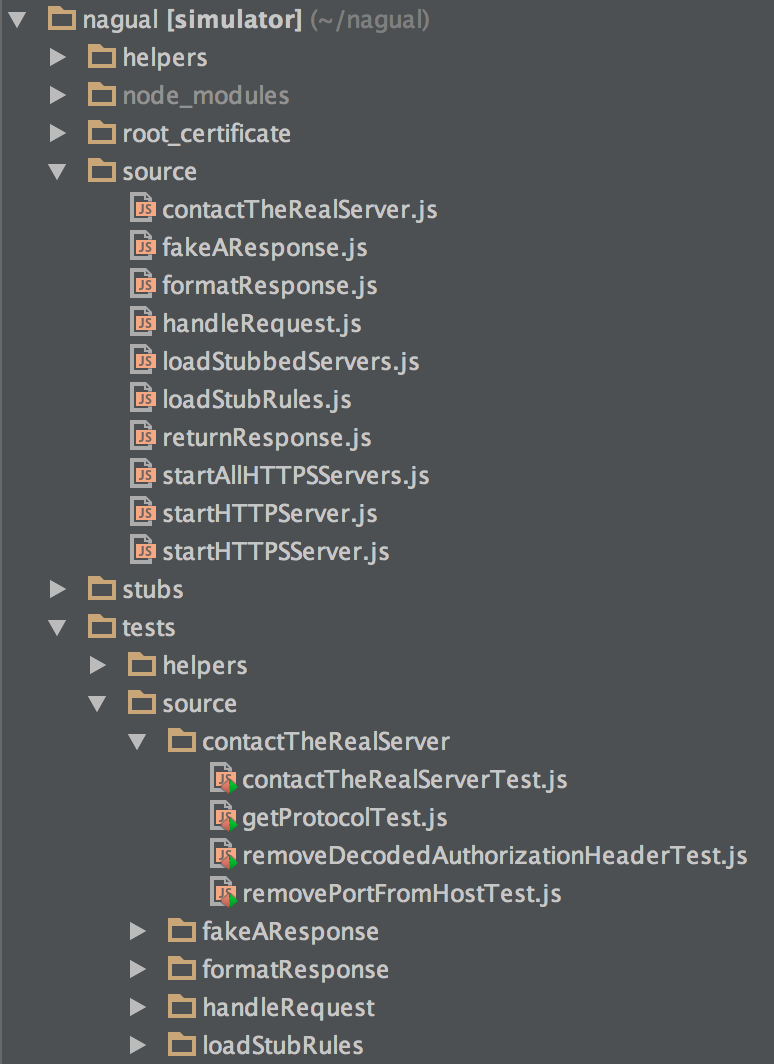

Whatever unit test framework you choose, it’s common practice to mirror the directory structure of your main application in the tests directory.

As you can see, the directory tests/source/contactTheRealServer contains all the tests for the four functions exposed by the contactTheRealServer module. The test file names need to end with ‘Test’ or ‘test’ so that the spec_files pattern in the Jasmine config picks them up.

This is what the config file looks like:

{ "spec_dir": "tests",

"spec_files": ["**/*[tT]est.js"],

"stopSpecOnExpectationFailure": false,

"random": false }

And this is how all the tests are run from the root directory:

JASMINE_CONFIG_PATH=tests/jasmine.json ./node_modules/jasmine/bin/jasmine.js

Small Modules

Set up your linter to alert you if function complexity is above 10. This will push you to break big modules into smaller ones, which are easier to unit test. As a rule of thumb, the number of tests you need to write for a function is about the same as its cyclomatic complexity, since that is the number of independent paths through it. When I started Nagual as a proof of concept, it was one big function, so to unit test it I had to break it down into smaller pieces.
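For ESLint, this threshold maps to the built-in complexity rule. A minimal sketch, assuming the classic .eslintrc.js config format; the file name, the `eslintConfig` variable and the choice of the "error" severity are my assumptions here, not something taken from Nagual:

```javascript
// .eslintrc.js (assumed file name; classic, non-flat ESLint config format)
var eslintConfig = {
  env: { node: true },
  rules: {
    // Report any function whose cyclomatic complexity is above 10.
    complexity: ['error', { max: 10 }]
  }
};

module.exports = eslintConfig;
```

Newer ESLint versions default to a flat eslint.config.js file instead, but the rules entry itself stays the same.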

Once the code is split up, it’s a matter of importing the modules you need in your file:

var formatResponse = require('./formatResponse.js').formatResponse;

function handleRequest(req, res) {

// more code

formatResponse(res);

// more code

}

module.exports.handleRequest = handleRequest;

You can also export only the functions that other modules need. This is similar to making a method public in OO languages. If you do not export a function to the outside world, there is no way to test it directly.

Writing basic test cases for a Node.js application is pretty much like writing them in other languages, so I'll focus only on the corner cases below.

Spying on Imported Functions

Let’s say you have the following module:

var returnResponse = require('./returnResponse.js').returnResponse;

function handleRequest(req, res) {

// more code

returnResponse(res);

// more code

}

You want to write a unit test that exercises handleRequest(). In particular, you want to know that returnResponse() has been called. With the current code you can’t do this, because handleRequest() calls its own local reference to returnResponse(), which the unit test cannot replace. One way around this is to not call returnResponse() directly but instead assign it to module.exports and call it through that interface. Here is how the code looks after the change:

var returnResponse = require('./returnResponse.js').returnResponse;

function handleRequest(req, res) {

// more code

module.exports.returnResponse(res);

// more code

}

module.exports.returnResponse = returnResponse;

module.exports.handleRequest = handleRequest;

Now the unit test looks like this (full example here):

var object = require('../../../source/handleRequest.js');

var handleRequest = object.handleRequest;

it('Handle GET request', function() {

// more code

spyOn(object, 'returnResponse');

handleRequest(request, response);

// more code

expect(object.returnResponse).toHaveBeenCalledWith(response);

});

We require the handleRequest module and spy on the returnResponse() function that we conveniently exported in module.exports. On the last line we check that it has been called with the right argument.

System Modules Stubbing

You can use the same trick to stub system modules. In this case, the code uses the system module ‘fs’ to check if a directory exists and if it is empty (the full example is here):

var fs = require('fs');

function loadStubbedServers(directory) {

// more code

var protocols = fs.readdirSync(directory);

if(protocols.length === 0) {

throw new Error('The ' + directory + ' directory is empty!');

}

// more code

}

module.exports.fs = fs;

module.exports.loadStubbedServers = loadStubbedServers;

The unit test looks like this:

var fs = require('../../../source/loadStubbedServers.js').fs;

var loadStubbedServers = require('../../../source/loadStubbedServers.js').loadStubbedServers;

it('Throws exception when reading stubs from empty directory', function() {

spyOn(fs, 'readdirSync').and.returnValue([]);

expect(function(){loadStubbedServers('empty_directory');}).

toThrowError(/The empty_directory directory is empty!/);

});
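The stub works because require('fs') hands every caller the same module object, so replacing readdirSync on it is visible inside loadStubbedServers(). A minimal sketch without Jasmine that swaps the function by hand and restores it afterwards (Jasmine removes its spies after each spec on its own). The simplified loadStubbedServers() here only mirrors the excerpt above, and the variable names are my own:

```javascript
var fs = require('fs');

// Simplified version of the function under test.
function loadStubbedServers(directory) {
  var protocols = fs.readdirSync(directory);
  if (protocols.length === 0) {
    throw new Error('The ' + directory + ' directory is empty!');
  }
  return protocols;
}

var originalReaddirSync = fs.readdirSync;
var errorMessage = null;

// Stub: pretend every directory is empty, without touching the disk.
fs.readdirSync = function () {
  return [];
};

try {
  loadStubbedServers('empty_directory');
} catch (error) {
  errorMessage = error.message;
} finally {
  // Always restore, otherwise later code keeps seeing the stub.
  fs.readdirSync = originalReaddirSync;
}

console.log(errorMessage);
```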

Stubbing require()

In Nagual’s case there is a function that loads objects from the file system via require(). You cannot directly control require(), but you can wrap it in a function that you can overwrite, and use this new function to load your objects (the full example is here):

function localRequire(file) {

return require(file);

}

and then instead of:

var stub = require(path.join('../stubs/', protocol, server, stubFile));

you can use:

var stub = module.exports.localRequire(path.join('../stubs/', protocol, server, stubFile));

As long as localRequire() is exported on module.exports, it can be fully controlled via spies.

Testing Event Emitters

It took me a while to figure out how to do this, and I couldn’t find much information on the Internet either. Nagual has a function like this:

function handleRequest(req, res) {

var body = [];

// Node.js reports req.method in upper case ('GET', 'POST', ...)

if (req.method === 'POST' || req.method === 'PUT' || req.method === 'DELETE') {

req.on('data', function (chunk) {

body.push(chunk);

});

req.on('end', function () {

req.body = Buffer.concat(body);

return module.exports.returnResponse(req, res, module.exports.formatResponse);

});

}

if (req.method === 'GET' || req.method === 'HEAD') {

return module.exports.returnResponse(req, res, module.exports.formatResponse);

}

}

In case the request is either GET or HEAD, there is no body and it’s trivial to test. In case the request has a body (POST, PUT or DELETE methods), an event listener is involved: the on() function listens for ‘data’ and ‘end’ events. Testing is not straightforward because these are asynchronous operations. One way to test it is the following:

var passThrough = require('stream').PassThrough;

it('Handle POST request', function() {

var request = new passThrough();

var response = {};

request.method = 'POST';

request.headers = {'content-type': 'JSON'};

spyOn(object, 'returnResponse');

handleRequest(request, response);

request.emit('data', Buffer.from('123'));

request.emit('data', Buffer.from('456'));

request.emit('end');

expect(object.returnResponse).toHaveBeenCalledWith(request, response, object.formatResponse);

expect(object.returnResponse.calls.mostRecent().args[0].body).toEqual(Buffer.from('123456'));

});

Node.js has a stream called PassThrough (all streams are instances of EventEmitter). It acts as a test dummy that can be used to emit ‘data’ and ‘end’ events, and it is used instead of the real request. The last assertion makes sure that the POST buffer is assembled correctly (which also means that the ‘end’ event was handled).
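The test can stay synchronous even though the listeners look asynchronous, because EventEmitter's emit() calls the registered listeners synchronously, in registration order, before it returns. A stripped-down sketch without Jasmine; collectBody() is a hypothetical helper that mirrors the body-assembling part of handleRequest():

```javascript
var PassThrough = require('stream').PassThrough;

// Hypothetical helper mirroring the 'data'/'end' handling in handleRequest().
function collectBody(req, done) {
  var body = [];
  req.on('data', function (chunk) {
    body.push(chunk);
  });
  req.on('end', function () {
    done(Buffer.concat(body));
  });
}

var request = new PassThrough();
var received = null;

collectBody(request, function (buffer) {
  received = buffer;
});

// emit() runs the listeners immediately, so no callbacks or timers are needed.
request.emit('data', Buffer.from('123'));
request.emit('data', Buffer.from('456'));
request.emit('end');

console.log(received.toString()); // prints "123456"
```

If the data were pushed with request.write() and request.end() instead, delivery would be deferred and the spec would need Jasmine's asynchronous support (for example a done callback).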